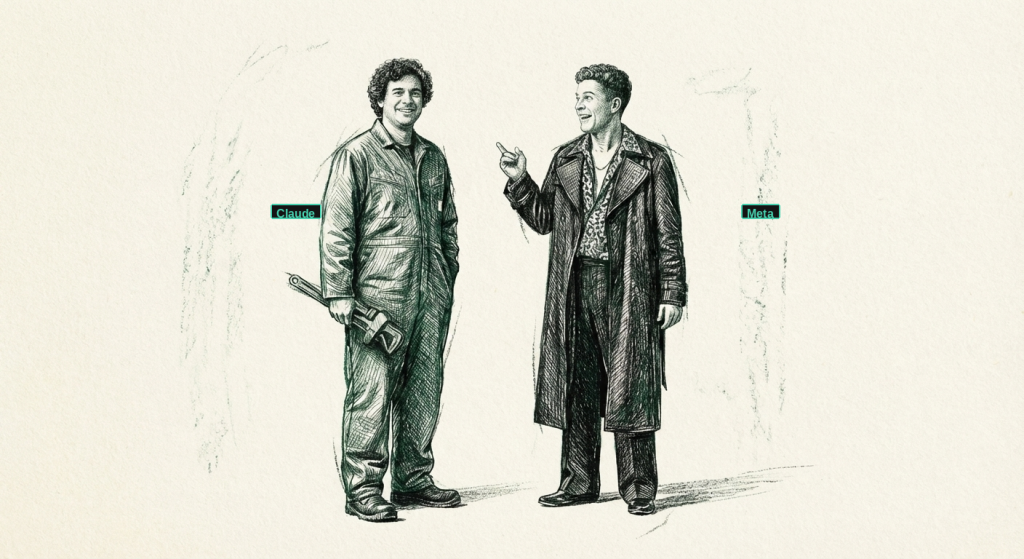

Anthropic Built the Plumbing. Meta Built the Cash Register.

One company shipped four enterprise products in a week: managed agents, desktop AI, cybersecurity scanning, and intelligent model routing. The other revealed it was never competing in the same race.

THE NUMBER: $0.08 — the cost per session hour for an autonomous AI agent that can work for hours without human intervention. Eight cents for the orchestration layer. But here’s the business model that matters: the real revenue is the inference underneath. Every agent session burns tokens — Opus tokens, Sonnet tokens, Haiku tokens — and Anthropic collects on every one. The $0.08 isn’t the price. It’s the on-ramp. Anthropic just built the cheapest toll road in enterprise software, and every car on it burns their fuel.

Yesterday we wrote that the AI house needed plumbing. Then Anthropic showed up with the truck, the crew, and the full set of blueprints.

In one product cycle, Anthropic shipped Managed Agents — autonomous AI that runs for hours on enterprise tasks at eight cents per session hour. They pushed Cowork to general availability on macOS and Windows for every paid subscriber, complete with role-based access controls, group spend limits, and usage analytics. They unveiled Claude Mythos, a cybersecurity frontier model so capable they won’t release it publicly — it found a 27-year-old TCP flaw in OpenBSD and 181 zero-day exploits in Firefox where their previous best model found two. And they wrapped it all in Project Glasswing, a defensive coalition with Apple, AWS, Google, Microsoft, Nvidia, JPMorgan, and Cisco writing the checks.

🦞 This isn’t a product launch. It’s an enterprise stack. Managed Agents handles orchestration. Cowork handles the desktop. Mythos handles security surface scanning. Model routing handles cost — switching between Mythos, Opus, Sonnet, and Haiku depending on task complexity so companies aren’t burning Opus-level tokens on Haiku-level work. Nobody else has shipped all four layers simultaneously.

Meanwhile, Meta dropped Muse Spark from its Superintelligence Labs, and every benchmark-obsessed commentator missed the point entirely. Muse Spark ties for fourth on broad AI reasoning tests. It’s “absolute rubbish,” as one observer put it, if you’re measuring it against Claude or GPT. But Mark Zuckerberg doesn’t care about AGI. He cares about selling you stuff.

💲 Muse Spark is an entity recognition engine trained on 3.5 billion users’ behavioral data with no opt-out outside the EU. It recognizes objects, understands context, and points users toward purchasable products through Instagram’s one-tap checkout. The AI conversations themselves become ad targeting signals. It’s not a frontier model competitor. It’s the most sophisticated commerce engine ever built, wearing a chatbot’s skin. The only question is whether people keep showing up — because AI slop in the feed doesn’t compete with content from real friends. It competes with the platform’s reason to exist.

Tomasz Tunguz dropped a framework this week that makes the split precise. His AI Problem Matrix maps work along two axes: infinite versus finite demand, and closed versus open loops. Anthropic is building the infrastructure for the quadrant where demand never stops and AI can verify its own output — the economic engine. Meta thinks it’s in the same quadrant, but its “closed loop” depends on human engagement, and that’s the variable nobody’s stress-testing. Two companies. Two completely different theories of what AI is for. One bet has a test suite. The other has a feed algorithm.

Anthropic Ships the Enterprise AI Stack Nobody Else Has Built

🦞 Anthropic didn’t just announce a product this week. They announced a thesis: that the company which controls agents, security, desktop access, and cost routing wins the enterprise.

Start with Managed Agents, now generally available. These aren’t chatbot wrappers — they’re autonomous systems that run for hours on complex tasks, return persistent results, and cost $0.08 per session hour plus standard token rates. Notion is using them for workspace delegation. Rakuten built enterprise agents across Slack and Teams. Sentry deployed them for debugging with automated patch generation. Anthropic claims the time from prototype to production drops by 10x.
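To see why the eight cents is the on-ramp rather than the price, here is a back-of-the-envelope sketch of one session's bill. The $0.08 session rate comes from the announcement as described above; the token rates and token counts are placeholder assumptions chosen only to make the arithmetic visible, not real list prices.

```python
from dataclasses import dataclass

# From the article: orchestration fee per session hour, in USD.
SESSION_RATE_PER_HOUR = 0.08


@dataclass(frozen=True)
class TokenRates:
    """Hypothetical per-million-token prices in USD (not real list prices)."""
    input_per_million: float
    output_per_million: float


def session_cost(hours: float, input_tokens: int, output_tokens: int,
                 rates: TokenRates) -> dict:
    """Split one agent session's bill into orchestration and inference."""
    orchestration = hours * SESSION_RATE_PER_HOUR
    inference = (input_tokens / 1_000_000 * rates.input_per_million
                 + output_tokens / 1_000_000 * rates.output_per_million)
    return {
        "orchestration": round(orchestration, 2),
        "inference": round(inference, 2),
        "total": round(orchestration + inference, 2),
    }


# Placeholder inputs: a four-hour session on made-up token rates.
example_rates = TokenRates(input_per_million=5.0, output_per_million=25.0)
bill = session_cost(hours=4, input_tokens=2_000_000, output_tokens=400_000,
                    rates=example_rates)
print(bill)
```

Under these assumed numbers the orchestration fee is 32 cents of a roughly twenty-dollar session. The exact split depends entirely on the real token rates and how much the agent reads and writes, but the shape is the point: the session fee is a rounding error next to inference.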

Then there’s Cowork — the desktop agent that went GA for all paid subscribers on macOS and Windows. This is the piece most people underestimated. Enterprise role-based access controls, group spend limits, usage analytics, and an OpenTelemetry integration that lets IT departments actually monitor what the AI is doing. It’s not a toy anymore. It’s a managed endpoint.

But the real flex is Claude Mythos and Project Glasswing. Mythos scored 83.1% on Cybergym versus 66.6% for Opus 4.6 — a generational leap in cybersecurity capability. It found thousands of zero-day vulnerabilities across every major OS and browser. A 27-year-old TCP flaw in OpenBSD. A 16-year-old H.264 bug in FFmpeg. 181 Firefox exploits where Opus found two. Human reviewers agreed with its severity ratings 89% of the time. Anthropic deemed it too powerful to release publicly, restricting access to vetted partners within the Glasswing coalition — which includes Apple, AWS, Google, Microsoft, Nvidia, Cisco, JPMorgan, and Palo Alto Networks, backed by $100 million in usage credits.

And underneath all of it: intelligent model routing that switches between Mythos, Opus, Sonnet, and Haiku based on task complexity. This is the part that matters for CFOs. You’re not paying frontier-model prices for routine classification tasks. The system drops to cheaper models when it can and escalates when it must. Enterprise cost control baked into the architecture.
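The routing idea is easy to picture in a few lines. This is a minimal sketch of complexity-based routing, not Anthropic's implementation: the model names come from the article, while the complexity score, the tier ceilings, and the relative costs are all invented for illustration. Mythos is left out because the article describes it as restricted to vetted partners.

```python
# Tiers ordered cheapest first: (model name, max complexity handled, relative cost).
# Ceilings and costs are made-up illustration values, not published figures.
TIERS = [
    ("Haiku", 0.3, 1.0),
    ("Sonnet", 0.7, 3.0),
    ("Opus", 1.0, 15.0),
]


def route(complexity: float) -> str:
    """Return the cheapest tier whose ceiling covers the task's complexity (0 to 1)."""
    if not 0.0 <= complexity <= 1.0:
        raise ValueError("complexity must be between 0 and 1")
    for name, ceiling, _cost in TIERS:
        if complexity <= ceiling:
            return name
    return TIERS[-1][0]  # unreachable while the last ceiling is 1.0


def routed_cost(complexities: list[float]) -> float:
    """Total relative cost when each task goes to its routed tier."""
    cost_by_model = {name: cost for name, _ceiling, cost in TIERS}
    return sum(cost_by_model[route(c)] for c in complexities)


# Hypothetical workload: two routine tasks, one mid-weight, one hard.
tasks = [0.1, 0.2, 0.5, 0.9]
all_opus_cost = len(tasks) * TIERS[-1][2]
print([route(c) for c in tasks], routed_cost(tasks), all_opus_cost)
```

With these invented numbers, the routed workload costs a third of sending everything to the top tier. The hard part in practice is the piece this sketch assumes away: scoring a task's complexity before you run it.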

Tomasz Tunguz published a framework this week that explains why this stack matters more than any benchmark. His AI Problem Matrix separates work along two axes: infinite versus finite demand, and closed versus open loops. The quadrant that prints money is Closed Loop + Infinite Demand — where AI can verify its own work and more output always creates more value. Software engineering lives there. GitHub is on pace for 14 billion commits this year, up from 1 billion in 2025. Demand is infinite, and tests close the loop.

Every piece of Anthropic’s stack targets that quadrant. Managed Agents run autonomous coding and debugging workflows where output is verifiable. Mythos scans security surfaces against known vulnerability databases — closed loop. Model routing optimizes cost across infinite inference volume. They’re not selling a chatbot. They’re selling the infrastructure for the only quadrant where AI compounds without human gatekeeping.

The action item: If you’re evaluating AI vendors this quarter, stop comparing model benchmarks. Compare stacks. Ask who handles agent orchestration, who gives you desktop deployment with IT controls, who’s scanning your security surface, and who lets you route between models based on cost. Right now, one company has answers to all four questions. The rest are still selling chatbots.

Meta’s Muse Spark Isn’t an AI Model. It’s a Commerce Engine.

💲 Meta Superintelligence Labs shipped its first model this week, and the tech press tripped over itself benchmarking Muse Spark against frontier competitors. That’s like benchmarking a vending machine against a Michelin-star kitchen. They’re not in the same business.

Muse Spark is a multimodal reasoning model that handles voice, text, and image inputs. It ties for fourth on the Artificial Analysis broad AI test index. It’s competitive with Opus 4.6 on reasoning but lags on coding and ARC-AGI 2. By frontier standards, it’s fine. Not special. The team led by Alexandr Wang, the former Scale AI CEO whom Meta hired as chief AI officer, rebuilt Meta’s AI stack from scratch in nine months. That’s impressive engineering. But the engineering isn’t the story.

The story is what Muse Spark is designed to do. It recognizes objects in photos, understands context from conversations, and routes users toward purchasable products. Point your Ray-Ban glasses at a pair of shoes, and Muse Spark identifies them, finds similar options, and pushes you toward Instagram’s one-tap checkout. Take a photo of your dinner, and it provides nutritional analysis — while noting which ingredients are available for delivery. The AI conversations themselves become targeting signals. Every question you ask, every image you share, every preference you reveal feeds the commerce engine.

Meta spent $72 billion on AI infrastructure in 2025. They didn’t spend it to win reasoning benchmarks. They spent it because they have 3.5 billion users across Instagram, WhatsApp, Facebook, and now the Ray-Ban platform, and they need those users buying things. Sam Altman claimed Meta offered $100 million signing bonuses to poach talent. That’s not the behavior of a company chasing AGI. That’s the behavior of a company building the most sophisticated advertising and commerce platform in human history.

But here’s the question nobody in Menlo Park wants to answer: do people still show up? In the age of AI-generated content, engagement is the assumption the entire thesis rests on — and it’s the assumption most worth questioning. AI slop doesn’t compete with photos from real friends. It competes with the feed’s credibility. They’ve sold millions of Ray-Ban smart glasses, sure. But they reach billions daily through screens, and if those billions start scrolling less because the feed feels synthetic, the commerce engine loses its foot traffic. Meta’s AI play requires Meta’s platforms to still matter. That’s not a given anymore.

Apply Tunguz’s matrix here and the picture sharpens. Meta thinks it’s building in the Closed Loop + Infinite Demand quadrant — conversion metrics close the loop, and there’s always more stuff to sell. But the “infinite demand” assumption rests on infinite engagement, and that’s the part that’s breaking. If AI-generated content degrades the feed, engagement drops, the commerce engine loses its audience, and the loop opens. Anthropic’s closed loops are verified by test suites and vulnerability databases. Meta’s closed loop is verified by human attention — and human attention is the one resource AI can’t manufacture.

It’s human manipulation on steroids. The open question is whether there’s anyone left in the store.

Why this matters: Don’t benchmark Meta against Anthropic or OpenAI. They’re playing a different sport. If you’re a consumer brand, stop worrying about whether ChatGPT or Perplexity indexes your website. Start worrying about whether your products exist in Meta’s visual intent database — the entity recognition layer that decides what Muse Spark surfaces when 3.5 billion users point a camera or ask a question. If you’re building enterprise AI strategy, Meta isn’t your vendor. They’re your distribution channel’s new landlord, and the rent just got algorithmic.

What This Means For You

The AI industry just split into two completely different businesses, and the companies that don’t recognize the fork are going to pick the wrong vendors, deploy the wrong tools, and wonder why their competitors pulled ahead.

Stop comparing models. Start comparing stacks. The benchmark wars are a distraction. Anthropic didn’t win this week by having better MMLU scores. They won by shipping the four things enterprise buyers actually need: agent orchestration, desktop deployment, security scanning, and cost routing. If your vendor can’t answer all four, you’re buying components when you need a platform.

Get your products into Meta’s visual intent database. Everyone’s tripping over themselves to get indexed by ChatGPT and Perplexity. Meanwhile, Meta just built an entity recognition engine that decides what 3.5 billion users see when they point a camera or ask a question. If your product isn’t in that database, it doesn’t exist on the world’s largest commerce surface. Stop optimizing for search. Start optimizing for visual intent.

The offense play is hiring agent operators, not cutting headcount. Managed Agents at eight cents an hour doesn’t mean you fire your team. It means one person orchestrating agents produces the output of ten. The companies that scale fastest will be the ones that hire five people who each run a swarm — turning a fifty-person company into a five-hundred-person company in output.

Tunguz’s matrix isn’t just a framework — it’s a vendor selection tool. Put every AI investment you’re considering into one of his four quadrants. If it’s in Closed Loop + Infinite Demand, move fast. If the “closed loop” depends on human attention rather than automated verification, stress-test the engagement assumption before you write the check. The AI industry just forked. One side runs on test suites. The other runs on dopamine. Choose accordingly.
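If you want to run that sort as an actual checklist, a rough sketch follows. The two axes and the advice for the Closed Loop + Infinite Demand quadrant come from the text above; the `Verifier` categories, the field names, and the example entries are our own assumptions, and the article offers no guidance for the other three quadrants, so the code says so rather than inventing any.

```python
from dataclasses import dataclass
from enum import Enum


class Verifier(Enum):
    """What closes the loop (hypothetical categories for this sketch)."""
    AUTOMATED = "automated"              # test suites, vulnerability databases
    HUMAN_ATTENTION = "human attention"  # engagement, conversion metrics
    NONE = "none"                        # nothing verifies the output


@dataclass(frozen=True)
class Investment:
    name: str
    infinite_demand: bool
    verifier: Verifier


def quadrant(inv: Investment) -> str:
    """Place an investment on the two axes of the matrix."""
    loop = "Open Loop" if inv.verifier is Verifier.NONE else "Closed Loop"
    demand = "Infinite Demand" if inv.infinite_demand else "Finite Demand"
    return f"{loop} + {demand}"


def recommendation(inv: Investment) -> str:
    """Apply the article's advice, which only covers one quadrant."""
    if quadrant(inv) != "Closed Loop + Infinite Demand":
        return "no guidance from the article"
    if inv.verifier is Verifier.HUMAN_ATTENTION:
        return "stress-test the engagement assumption"
    return "move fast"


# Illustrative entries only, mirroring the two bets described in this briefing.
coding_agents = Investment("autonomous coding agents", True, Verifier.AUTOMATED)
feed_commerce = Investment("feed-driven commerce", True, Verifier.HUMAN_ATTENTION)
for inv in (coding_agents, feed_commerce):
    print(inv.name, "|", quadrant(inv), "|", recommendation(inv))
```

Both example entries land in the same quadrant on paper. The difference only shows up when you ask what is doing the verifying, which is the question worth putting to every vendor on your list.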

Three Questions We Think You Should Be Asking Yourself

If Anthropic is building the enterprise stack, what happens to every AI startup that only built one layer? The agent orchestration companies, the AI security startups, the cost-optimization wrappers — they all just got Salesforce’d. When the platform vendor ships native solutions for agents, security, and routing, point solutions have about eighteen months before the oxygen gets thin. Check your portfolio.

What does your company look like when the smartest salesperson on earth works for your competitor’s distribution channel? Meta’s commerce engine doesn’t care about your brand strategy. It surfaces products based on behavioral prediction across 3.5 billion users. If you’re not optimizing for AI-mediated discovery, you’re optimizing for a shelf that’s about to get rearranged without your consent.

Are you hiring people who can define and manage work, or just people who do it? The Managed Agents pricing makes the math unavoidable. At eight cents per session hour plus token costs, versus forty-five dollars an hour for a knowledge worker, the skill premium shifts from execution to orchestration. The most valuable hire at your company next year might not be the best engineer or analyst. It might be the person who can direct a fleet of agents and know when the output is wrong.

“Every feature must justify its existence in the eyes of the consumer.”

— Tony Fadell

Anthropic just shipped four features that justify themselves. Everyone else is still shipping demos.

— Harry and Anthony

Sources:

- Anthropic Managed Agents announcement

- Claude Mythos and Project Glasswing introduction

- Meta Superintelligence Labs / Muse Spark launch

- Anthropic Cowork general availability

- Coatue Anthropic valuation projections

- Meta AI infrastructure spending ($72B capex)

- Project Glasswing coalition partners and $100M credits

- Tomasz Tunguz — The AI Problem Matrix

- GitHub: 14 billion commits pace, 2.1B Actions minutes/week
